Technology, Not Climate, Will Determine the Future of Our Food System

That’s why R&D funding is more urgent than ever


In 1968, the American scientists Paul and Anne Ehrlich published The Population Bomb. In it, the Ehrlichs foresaw widespread death and despair as global populations, especially in the lower-income countries of South Asia and Sub-Saharan Africa, grew beyond the “limits” of global agricultural output.

The Ehrlichs were wrong. Populations continued to expand over the second half of the twentieth century, but so did agricultural output. And while global temperature has increased by over a degree Celsius since 1970 (a change the Ehrlichs would likely have expected to bring even worse famines), the past half-century has been the most food-secure in recorded human history.

As incorrect as he might have been in 1968, Paul Ehrlich has remained an influential voice for environmentalists, population control advocates, and doomsayers who make similar predictions about humanity’s inability to continue to feed itself. On the 50th anniversary of The Population Bomb in 2018, Ehrlich restated his lifelong thesis, now with the addition of climate change, arguing in the academic journal Sustainability that “overpopulation” threatened to trigger future starvation.

While Ehrlich’s focus on population control as a solution to the supposed limits on global agricultural output has gone out of fashion, the most strident climate activists still like to warn of impending starvation at the hands of future climate change. Like Ehrlich, these activists point to climate change modeling that shows declining agricultural yields due to temperature increases, drought, and extreme weather as evidence of their claims. But these claims miss an important point—the same one that Ehrlich failed to recognize and that most major studies pointing to impending disaster ignore, too.

Although climate change may very well reduce agricultural productivity compared to a world without climate change, there is no reason to believe that technological developments cannot completely offset that impact.

For many activists, and some scientists and modelers, letting go of the narrative that climate change will lead to starvation may seem dangerous. Without it, the pressure on governments, corporations, and individuals to respond appropriately to climate change may seem diminished. But false premises are unnecessary to motivate a proper response when there is already a long list of real and legitimate reasons to act on climate change. They also risk drawing investment and attention to low-priority areas of climate mitigation or adaptation at the expense of more pressing and more scientifically certain challenges, brought about both by climate change and by the plethora of other drivers of food insecurity: geopolitical, socioeconomic, and more.

Getting policy right requires clearly articulating the challenges and needs related to climate mitigation and adaptation. For agriculture, that means investing in, and prioritizing, higher agricultural productivity worldwide through the development and adoption of new and proven technologies and practices where productivity lags. Mitigating the climate impacts of agriculture will be crucial; food production and consumption are responsible for roughly one-quarter to one-third of global emissions. But so, too, will be making sure that agricultural productivity growth continues and that society does not give in to false millenarian models of agricultural decline.

Climate Change’s Impact on Yields

There have been hundreds of studies and thousands of simulations published in the last several decades on the effects of climate change on agriculture. These studies encompass a very wide diversity of data sets, methods, models, predictor variables, and target variables. The most common study design looks at measures of temperature and precipitation over the growing season and their relationship with yields (e.g. tons per acre) of staple crops like maize, rice, soybean, and wheat.

A major challenge for understanding the influence of environmental conditions on yields is that the variation caused by long-term change in these conditions can be overwhelmed by that caused by technological and socioeconomic factors. Thus, studies attempting to quantify the impact of climate change on agricultural productivity must tease out the relationship in a context of many other confounding factors that can have a much larger influence.
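To make the confounding problem concrete, here is a minimal sketch of one common way to separate a climate signal from a technology-driven yield trend: remove a linear time trend from both yields and growing-season temperature, then regress the detrended yields on the detrended temperatures (by the Frisch-Waugh-Lovell theorem, this gives the same temperature coefficient as a regression that includes a time trend). The data are synthetic and noise-free, and every number in it (the trends, the temperature series, the assumed effect of -0.1 t/ha per degree) is hypothetical; real studies use far richer models.

```python
import math


def fit_line(x, y):
    """Least-squares fit of y = a + b*x. Returns the slope b and the residuals."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    return slope, residuals


# Hypothetical, noise-free synthetic data for 50 growing seasons.
seasons = list(range(50))
# Growing-season temperature anomaly: slow warming plus year-to-year swings.
temperature = [0.02 * t + 0.5 * math.sin(t) for t in seasons]
TRUE_TEMP_EFFECT = -0.1  # assumed yield change (t/ha) per degree of warming
# Yield: a strong technology-driven trend plus the smaller temperature effect.
yields = [2.0 + 0.05 * t + TRUE_TEMP_EFFECT * temp
          for t, temp in zip(seasons, temperature)]

# Naive approach: regress yield directly on temperature.
naive_slope, _ = fit_line(temperature, yields)

# Detrended approach: strip the linear time trend from both series,
# then regress the yield residuals on the temperature residuals.
_, yield_resid = fit_line(seasons, yields)
_, temp_resid = fit_line(seasons, temperature)
detrended_slope, _ = fit_line(temp_resid, yield_resid)

print(f"naive estimate:     {naive_slope:+.3f} t/ha per degree")
print(f"detrended estimate: {detrended_slope:+.3f} t/ha per degree")
```

Because yields and temperatures both trend upward in this toy series, the naive regression credits part of the technology trend to warming and reports a positive temperature effect; the detrended regression recovers the assumed negative effect.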

Studies take a range of approaches to these challenges and find a wide range of results. One major global study of crop yields from 1974 to 2008 found that yield responses to climate change varied widely by location and crop, but that the net global effect was a 1% decrease in consumable food calories from 10 major crops relative to a hypothetical world without climate change.

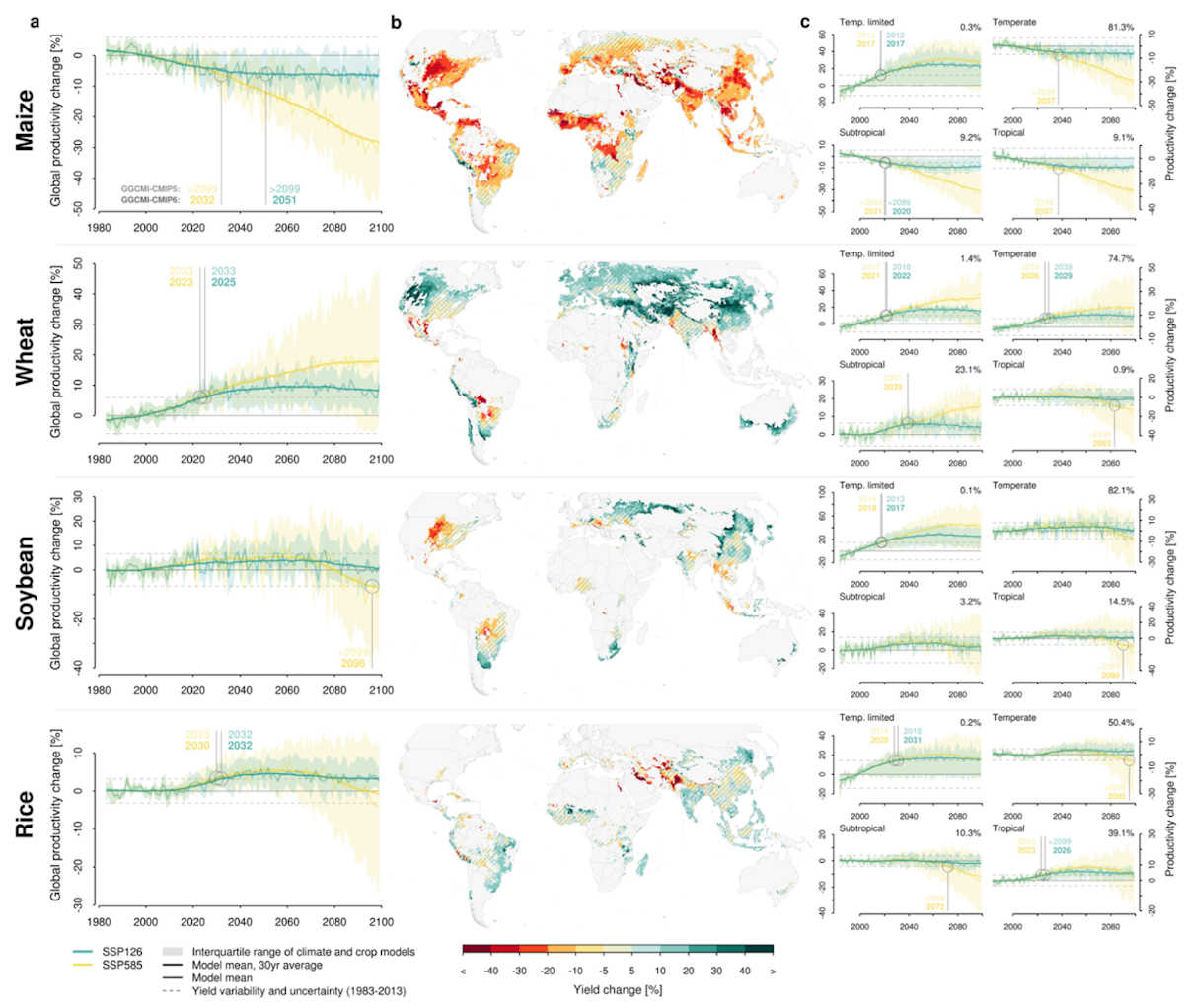

Another recent major study, though, indicated that a range of potential warming and enhanced CO2 levels would actually increase global wheat, rice, and perhaps soybean yields and decrease maize yields (see Figure 1).

Figure 1: Projections of Global Crop Productivity for the 21st Century

Most studies, however, find that climate and CO2 changes are detrimental to yields at the global level. Meta-analyses synthesized by the IPCC report that, since 1960, climate and CO2 change have decreased yields of wheat by 4.9%, maize by 5.9%, and rice by 4.2% relative to a hypothetical world without climate change.

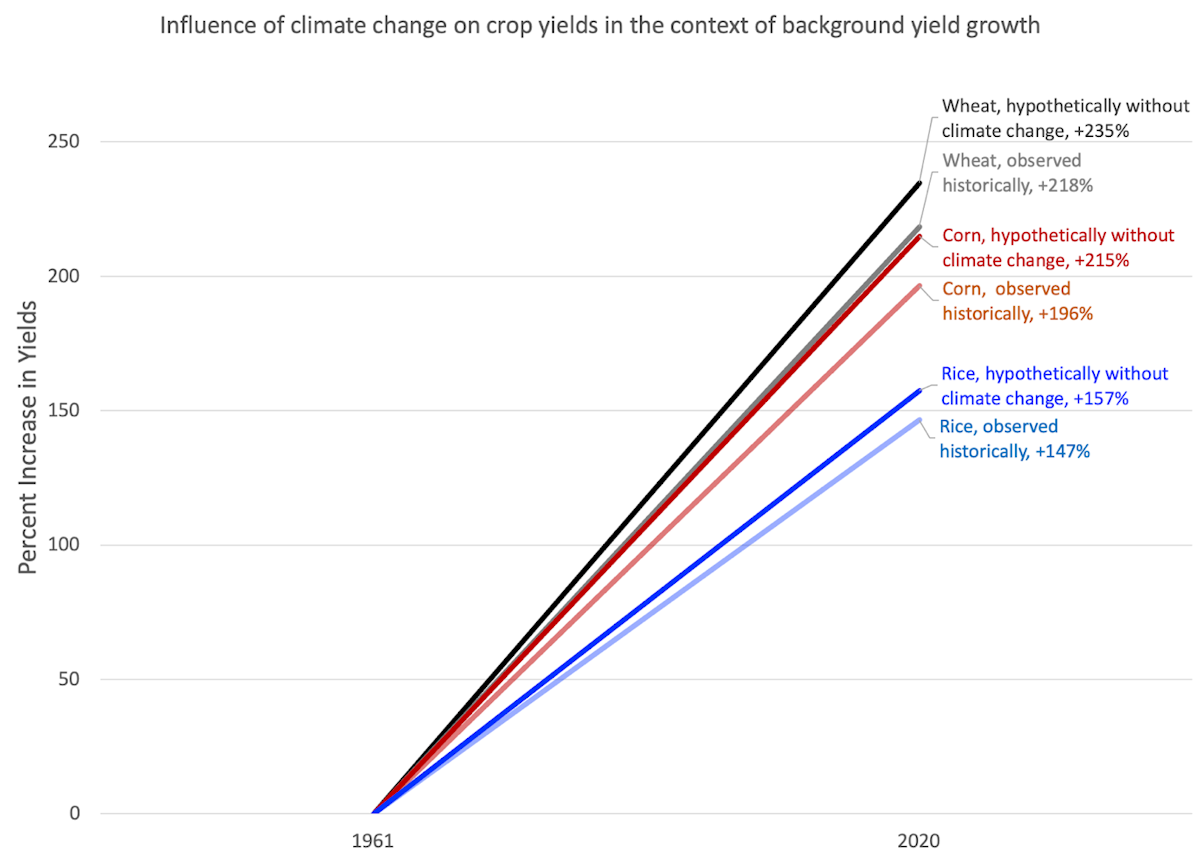

But even these estimated impacts look small when you place them in the context of large background yield growth (See Figure 2).

Figure 2: Influence of climate change on crop yields in the context of background yield growth 1961-2020

Agricultural Productivity Growth in the Face of Climate Change

Over roughly the past half-century, the world has warmed about 1 degree Celsius, and yet global agricultural output has increased almost fourfold. Global total factor productivity, a measure of overall efficiency (agricultural output divided by the combined total of inputs such as land, labor, machinery, and fertilizer), increased by 79% over the same period. And while global agricultural land use increased by 25%, from just over 1.7 billion hectares in 1970 to just under 2.2 billion hectares today, without the increased yields made possible by technological advancement a further 3.1 billion hectares of land could have been converted. In other words, even with small productivity losses compared to a hypothetical world without climate change, yield gains during that period saved just under a quarter of the world’s total arable land from agricultural expansion.
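Because total factor productivity is a ratio of output to inputs, the two headline figures in this paragraph also say something about how much inputs grew. A small sketch, taking both figures at face value as covering the same period and treating “almost four-fold” as 3.9 (an assumption, not a figure from the source):

```python
import math

# Figures from the text; reading "almost four-fold" as 3.9x is an assumption.
OUTPUT_INDEX = 3.9  # global agricultural output relative to ~1970
TFP_INDEX = 1.79    # total factor productivity relative to ~1970 (+79%)

# TFP = output / inputs, so the implied aggregate input index is output / TFP.
input_index = OUTPUT_INDEX / TFP_INDEX

# Growth accounting in logs: ln(output) = ln(TFP) + ln(inputs),
# so ln(TFP) / ln(output) is the share of output growth not explained by more inputs.
tfp_share = math.log(TFP_INDEX) / math.log(OUTPUT_INDEX)

print(f"implied aggregate input growth: {input_index:.2f}x")
print(f"share of output growth from TFP: {tfp_share:.0%}")
```

On these assumptions, aggregate inputs roughly doubled, and a little over two-fifths of the output growth (in log terms) came from productivity rather than from using more land, labor, and materials.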

The productivity gains that prevented further agricultural land expansion were mainly due to the dramatic increases in agricultural yields since 1961. For example, global maize yields increased by 196%, wheat by 218%, and rice by 147% (Figure 2).
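Setting these yield gains beside the IPCC estimates quoted earlier in this section shows how small the climate penalty is relative to background growth. A minimal sketch, assuming that “decreased yields by X%” means observed yield equals the no-climate-change yield times (1 - X), and treating the IPCC period (since 1960) and the yield period (since 1961) as the same:

```python
# Observed yield growth since 1961 and estimated climate-driven yield losses
# (relative to a no-climate-change counterfactual), both taken from the text.
CROPS = {
    # crop: (observed yield growth, estimated loss from climate and CO2 change)
    "maize": (1.96, 0.059),
    "wheat": (2.18, 0.049),
    "rice": (1.47, 0.042),
}


def counterfactual_index(growth: float, loss: float) -> float:
    """Yield index (1961 = 1.0) in a hypothetical world without climate change,
    assuming observed = counterfactual * (1 - loss)."""
    observed = 1.0 + growth
    return observed / (1.0 - loss)


for crop, (growth, loss) in CROPS.items():
    observed = 1.0 + growth
    without_cc = counterfactual_index(growth, loss)
    print(f"{crop}: observed {observed:.2f}, without climate change {without_cc:.2f}, "
          f"shortfall {without_cc - observed:.2f}")
```

For maize, the estimated climate penalty comes to about 0.19 times the 1961 yield, against observed growth of 1.96 times the 1961 yield: a little under a tenth of the gain that would otherwise have occurred.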

Productivity gains didn’t just save land; they saved people. Increased global agricultural output has meant decreased food insecurity. Global per capita calorie availability, a measure of total food supply, has risen from 2357 kcal per day in 1970 to 2947 kcal in 2020, a 25% increase. According to the FAO, the prevalence of hunger in lower-income countries has fallen by almost two-thirds since 1970: whereas 34% of the population faced hunger in 1970, just 13% did in 2015. From 1870 until the 1970s, famines killed approximately 900,000 people each year, while from the 1980s onwards the average annual death toll from famines fell to about 75,000.
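The figures in this paragraph mix two kinds of change, and it helps to keep them apart: the fall in hunger from 34% to 13% is a drop of 21 percentage points, which is a relative decline of about 62% (the “almost two-thirds” above). A short check of the arithmetic, using only the numbers quoted in the paragraph:

```python
def pct_change(old: float, new: float) -> float:
    """Relative change from old to new, in percent."""
    return (new - old) / old * 100


calories = pct_change(2357, 2947)            # kcal per person per day, 1970 to 2020
hunger_relative = pct_change(34, 13)         # share of population facing hunger, 1970 to 2015
hunger_points = 13 - 34                      # the same change in percentage points
famine_deaths = pct_change(900_000, 75_000)  # average annual famine deaths

print(f"calorie availability: {calories:+.1f}%")
print(f"hunger prevalence: {hunger_relative:+.1f}% ({hunger_points} percentage points)")
print(f"annual famine deaths: {famine_deaths:+.1f}%")
```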

While the increase in global temperature over the past half-century did reduce agricultural productivity relative to a world without warming, net productivity growth over that period was nothing less than massive. The fact that the detrimental impact of global warming has been completely overwhelmed by technological and socioeconomic advances creates a predicament for making projections. The easiest and cleanest study design holds every influence on yields constant except climate change. The benefit of this design is that researchers do not have to speculate on unpredictable socioeconomic dynamics that affect the adoption of current best practices, or attempt to predict future technological advancement.

However, there is no reason to believe that technological and socioeconomic forces will suddenly stop impacting agricultural yields, or that the many other factors that come to bear on hunger—geopolitics and economic interdependencies, for example—will become unimportant.

Studies like the UN Food and Agriculture Organization’s Future of Food and Agriculture (FOFA) analysis have attempted to account for these multifactorial impacts. FOFA treats climate change as one of eighteen “critical drivers” of agricultural transformation when appraising future food system scenarios. Accounting for these factors, the FAO projects a 50% increase in global agricultural production by 2050 under business as usual.

Some might be tempted to think that the problem is, then, solved. But here, a look at the historical drivers of agricultural productivity and yield growth points to some real challenges.

The past half century’s gains were mainly due to massive productivity growth in places like the United States, Western Europe, and parts of South America, East Asia, and South Asia. While agricultural yields and productivity have increased in Africa, Central Asia, and other relatively low-productivity agricultural regions, growth rates have been slower, and the starting points were lower.

For example, while North American agricultural total factor productivity approximately doubled between 1970 and 2020, Sub-Saharan Africa’s agricultural productivity only saw a roughly 16% increase. According to USDA data, that figure has declined slightly since 2015.
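Cumulative percentages over 50 years hide how different the annual pace is. A small sketch converting them to compound annual growth rates, assuming both figures span the full 1970 to 2020 period:

```python
import math


def annualized_rate(total_ratio: float, years: int) -> float:
    """Compound annual growth rate implied by total growth of total_ratio over years."""
    return total_ratio ** (1 / years) - 1


def doubling_time(rate: float) -> float:
    """Years needed to double at a constant compound annual rate."""
    return math.log(2) / math.log(1 + rate)


north_america = annualized_rate(2.0, 50)  # TFP roughly doubled, 1970-2020
sub_saharan = annualized_rate(1.16, 50)   # TFP up roughly 16%, 1970-2020

print(f"North America: {north_america:.2%} per year")
print(f"Sub-Saharan Africa: {sub_saharan:.2%} per year, "
      f"doubling in about {doubling_time(sub_saharan):.0f} years")
```

Roughly 1.4% a year compounds to a doubling in 50 years, while roughly 0.3% a year would take well over two centuries to double productivity, which is why the gap between the two regions has widened rather than closed.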

Similarly, whereas global average per capita calorie availability has increased to match North American food supply of 1970, Africa today has a per capita caloric availability—about 2500 kcal—closer to the 1970 global average. And while global hunger, measured more broadly, has decreased precipitously since 1970, food insecurity and malnutrition remain critical issues in regions with lower agricultural productivity. A 2022 UN World Food Programme report highlighted 19 “hunger hotspots,” all of which were either regions currently in conflict or where agricultural productivity has been relatively stagnant.

Catching up to 20th Century Agriculture: Mechanical, Irrigated, Fertilized

In the United States, as well as in many other high-income countries, the middle decades of the twentieth century saw world-beating productivity growth. The adoption of labor-saving mechanical equipment – tractors, reapers, and the like – drastically reduced the need for farm labor inputs, while increasing outputs. The use of newer chemical inputs – like fertilizer and pesticides – in the decades immediately following World War II drove drastic crop yield increases. And the large-scale consolidation of farmland, matched by increasing farm specialization, altered the agricultural landscape, increasing output and allowing farmers to capitalize on economies of scale.

At the same time, the broader societal, technological, and economic changes that occurred in the years prior to 1970 in higher-income countries – namely electrification, massive infrastructure construction in the form of highways and railways, and large-scale water projects, to name a few – had significant benefits for agricultural productivity growth.

Outside of the U.S., and higher-income countries more broadly, agricultural productivity followed numerous paths over the past half-century. In India, for example, “green revolution” technologies like synthetic fertilizer and hybrid crops drastically improved crop yields but have been criticized for homogenizing Indian agriculture and forcing agricultural laborers off farmland without other options for gainful employment. Countries of the former Soviet Union, like Russia and Ukraine, have taken advantage of fertile soils and large-scale mechanization to increase yields – especially for grain production – without drastically increasing fertilizer use per acre.

For many lower-income countries, agricultural productivity growth came from the uneven adoption of technological equipment and new processes. Many farms in Sub-Saharan Africa, for example, are not irrigated, do not use mechanized equipment, and use only very small amounts of chemical inputs. At the same time, in lower-income countries, agricultural land ownership has remained relatively decentralized, meaning that small-holder farmers have remained the primary user of agricultural land. This limits the capital intensity of agriculture and keeps farms less specialized, as many smallholders produce food for subsistence and for local trade.

Irrigation can allow expanded cultivation in countries with little rainfall and can allow farmers to supplement water from rainfall in dry years, lessening or avoiding crop loss due to drought stress. Irrigated agriculture is twice as productive, on average, as rainfed agriculture, and irrigation has been an important driver for increasing crop yields in many countries. Despite these advantages, irrigated agriculture makes up only 20% of global cultivated land, with uneven distribution. Sub-Saharan Africa, for example, has the lowest percentage of irrigation on arable land at 4%, while Latin America has 14% irrigated land, and Asia boasts 37%.

Although irrigation can increase agricultural productivity, it can also contribute to water scarcity. However, high-efficiency targeted irrigation technologies like drip lines can dramatically reduce water wasted due to inefficient application. At the same time, regular maintenance of existing irrigation systems is necessary to reduce water wasted due to leaks. Globally, agriculture accounts for 70% of all freshwater withdrawals, and water-saving irrigation technologies are especially important in regions with high levels of water stress, such as the Middle East, North Africa, and South Asia.

But irrigation often cannot simply be the choice of individual farmers. Building out the necessary infrastructure to increase the number of acres irrigated across lower-income countries requires government intervention, and risks creating political and social tension about water projects—for example, dam construction—and water use.

More broadly, getting farmers in lower-income countries to adopt the many existing technologies and practices that make modern agriculture so productive will require government intervention, flexible financing, and the right incentives. For example, while almost all farms are mechanized in places like the United States, tractors and other labor-saving, productivity-boosting mechanical equipment remain underutilized in lower-income countries. In 1900, farmers in the United States relied on 21.6 million work animals; by 1960 (the last year the U.S. Census collected work animal data), American farmers used only 3 million work animals, having turned instead to 4.7 million tractors. Today, few farms in the United States do not use mechanized equipment.

In comparison, smallholder farmers in lower-income countries use tractors and other mechanical equipment at a much lower rate, relying instead on human and animal labor. In 2012, only 2% of Kenyan farmers used tractors, a decline from 5% in 1992. The use of oxen increased over the same period from 17% to 32% of Kenyan farms. Similarly, only 4% of Nigerian farmers used tractors in the rainy seasons between 2010 and 2012. Increasing the uptake of mechanical equipment will be crucial to increasing productivity and yields by reducing labor and improving the timeliness of farm operations, though the impact of cutting farm laborers out of the agricultural economy must be considered.

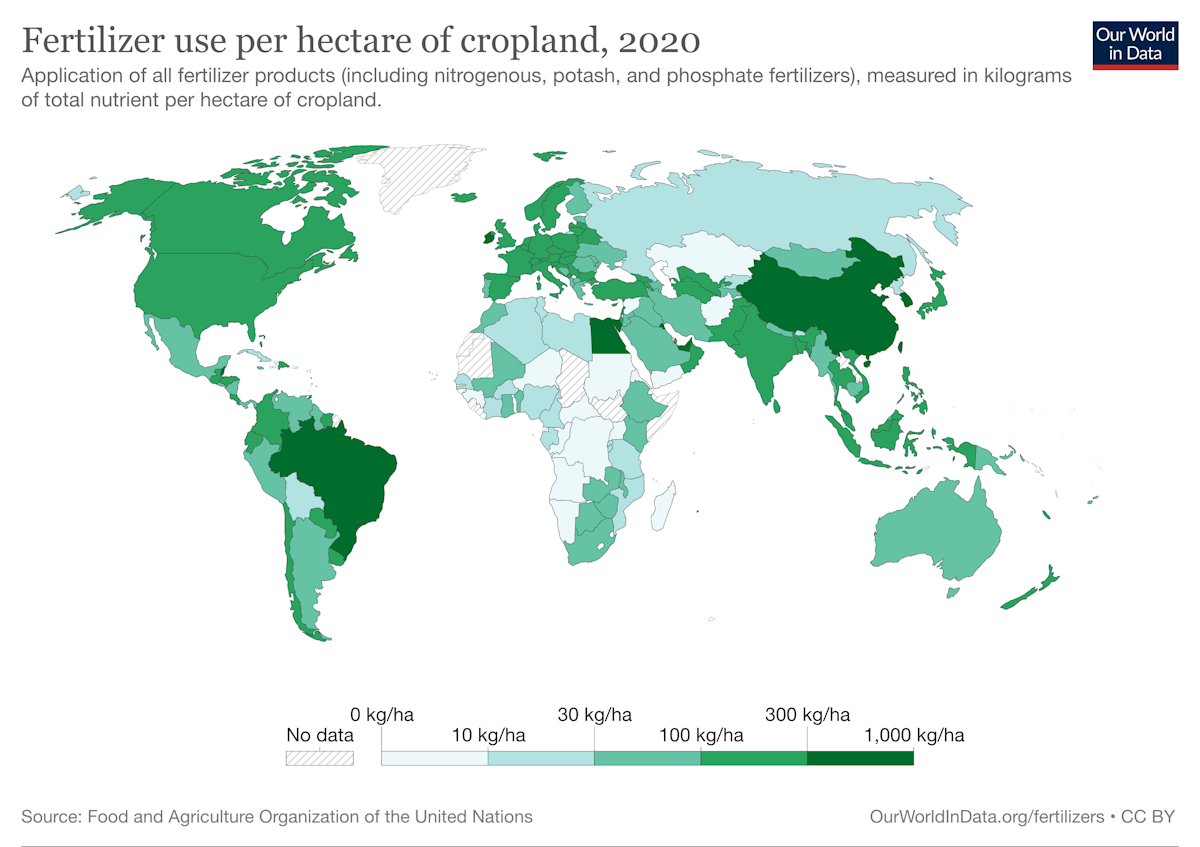

There is a similar uneven distribution of productivity-enhancing technologies and practices when it comes to chemical inputs. Agricultural producers in high-income countries tend to use significantly more nitrogen and other fertilizers than producers in lower-income countries (Figure 3). For example, while American farmers used an average of 124 kg of fertilizer products per hectare of cropland in 2020, every Sub-Saharan African country, except for Zambia, used less than half of that amount. Countries like the Democratic Republic of Congo, Congo, Niger, and Mali all used less than 10 kg of fertilizer products per hectare in 2020.

Figure 3: Fertilizer use per hectare of cropland, 2020

Efforts to increase fertilizer use in Sub-Saharan Africa have been effective at improving yields, but they have been piecemeal due to the high cost of fertilizer. In Nigeria, a fertilizer subsidy program increased yields by 38% between 2010 and 2013 for participating farmers, but cost a significant portion—as much as 26%—of the Nigerian agricultural budget. A similar program in Malawi from 2005 to 2009 increased yields by 42% for producers on average, but cost approximately 16% of the total government budget.

For mechanization and fertilizer use, as well as the myriad other agricultural technologies and practices whose adoption remains geographically uneven (like modern pesticides), increasing adoption in lower-income countries is not a simple decision free of consequences. Mechanization of agriculture reduces the need for labor, effectively cutting some farm laborers out of the agricultural economy. Fertilizer use could have the same consequences and could cause significant ecological harm if fertilizer is applied indiscriminately or irrationally. Broadly speaking, simply transplanting technologies and practices from one context to another risks overlooking local conditions. While the United States and other high-productivity agricultural regions can provide good examples of different pathways to increasing yields and overall productivity in agriculture, these comparisons cannot and should not serve as an exact roadmap for technological adoption and agricultural evolution.

In fact, the United States also provides an example of how not to industrialize agriculture. Nutrient runoff, the marginalization of rural communities, and the monopolization of agricultural input and processing industries are prices the United States paid for a high-productivity system. Ideally, recognizing these tradeoffs will allow agricultural productivity growth elsewhere to benefit from the technological breakthroughs behind high U.S. yields without the negative tradeoffs that currently define U.S. agricultural production.

Biotechnology and the Biological Revolution

A central pathway to increasing productivity growth and global agricultural yields is crop genetic improvement. But few technologies have drawn as much criticism as agricultural biotechnologies. Employing genetically modified and gene-edited crops to improve yields globally will require building trust, achieving buy-in from producers and consumers, and moving beyond punitive intellectual property laws. And yet the need for biotechnology to accelerate crop genetic improvement is pressing.

In the United States, crop genetic improvement has been responsible for about half of historical productivity gains in maize, soybeans, and wheat. By some estimates, further improvements in plant breeding could reduce global agricultural GHG emissions by almost 1 Gt CO2e per year by 2050, mainly by increasing crop yields. This estimate includes genetic improvement through conventional plant breeding; newer assisted breeding technologies like marker-assisted breeding and genomic selection; and biotechnology, which is an umbrella term that covers technologies including genetic modification, genetic engineering, GMOs, gene-editing, and CRISPR.

Historical yield gains due to genetic improvement before the 1990s were mainly due to conventional breeding. Conventional breeding—usually defined as crossbreeding, selection, and radiation and chemical mutagenesis—created higher-yielding, stronger, and shorter wheat plants that can hold more grain, and hybrid maize that grows well at higher planting densities. For example, the shift to hybrid maize—which started around 1930—improved yields, with 16% of maize yield gains in Minnesota between 1930 and 1980 due to the transition to hybrid seeds, and 43% to other breeding improvements.

Scientists started using genetic modification (sometimes referred to as genetic engineering or transgenesis) in crop plants in 1983, and the initial planting of genetically modified insect-resistant and herbicide-tolerant crops started around 1995 in the United States. Genetic modification allows for the introduction of a trait from one plant or non-plant species into a crop plant of another species. One global meta-analysis found that between 1995 and 2014, genetically modified insect-resistant and herbicide-tolerant crops had 22% higher yields and 37% lower pesticide use, on average, than non-genetically modified varieties. These benefits are on average greater in low-income countries where existing pest- and weed-management systems are less effective and the potential for improvement is higher. Yield gains are still significant, albeit smaller, in high-income countries. Genetic modification has also been used to create drought-tolerant wheat, which can increase crop yields under stressful conditions in drought years.

Gene editing—most recently through CRISPR—allows scientists to make small, precise changes to DNA. These changes can be indistinguishable from the single-letter DNA mutations that have historically been made at random using chemical and radiation mutagenesis in conventional breeding. However, gene editing is much faster. Gene editing is estimated to reduce the time to introduce a new trait to a crop by nearly two-thirds. For example, in order to add a disease resistance trait to a commercial variety of maize, it could take only 2–3 years using gene editing, compared to 7–8 years using conventional breeding. By another estimate, conventional breeding in maize generally takes an average of 9–11 years to introduce a new variety of crop to market; in comparison, gene editing can take just a few years.
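The “nearly two-thirds” figure can be checked against the maize example. A short sketch comparing the midpoints of the two quoted ranges, and their extremes, for illustration only:

```python
def time_reduction(conventional_years: float, edited_years: float) -> float:
    """Fraction of development time saved by gene editing versus conventional breeding."""
    return 1 - edited_years / conventional_years


midpoint = time_reduction(7.5, 2.5)  # midpoints of the 7-8 and 2-3 year ranges
best_case = time_reduction(8, 2)     # slowest conventional vs fastest gene editing
worst_case = time_reduction(7, 3)    # fastest conventional vs slowest gene editing

print(f"midpoint: {midpoint:.0%}, range: {worst_case:.0%} to {best_case:.0%}")
```

The midpoint comparison lands almost exactly on two-thirds; the extremes of the quoted ranges span a saving of roughly 57% to 75%.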

The first two gene-edited crops on the market (a high-oleic oil soybean and a high-GABA tomato) each arrived about 9 years after the gene-editing technology used to create it was first published. Gene editing is also being used to make crops drought tolerant or resistant to problematic diseases.

Tools for plant genetic improvement have become increasingly fast, predictable, and precise compared to conventional breeding or even to the first generation of genetically modified crops. Besides genetic modification and CRISPR gene editing, other new tools like marker-assisted breeding and genomic selection have increased the speed of conventional breeding.

Although biotechnology is not the only contributor to crop genetic improvement, it is an increasingly important component. A greater variety of tools provides flexibility for addressing the constant challenges of pests, diseases, and weeds—all of which are central constraints on yield growth—and continuously improving crop productivity. Compared to slower tools like conventional breeding, faster tools like genetic engineering and genome editing enable more rapid improvement.

But scientific breakthroughs in the technologies underpinning crop genetic improvement are useless for improving the productivity and output of global agriculture if high-quality seeds are not available to the millions of producers who need them. Put simply, the supply of high-quality seeds (seeds that carry desirable genetic traits, germinate and grow reliably, and are disease free) is a crucial contributor to increasing crop yields.

In higher-income countries, large-scale farms rely on high-quality seeds from certified providers. But in lower-income countries with many smallholder farmers, such seeds are often not available or affordable. Smallholders are left to rely on seeds they or other farmers save from previous seasons’ harvests (often called local cultivars or indigenous varieties), which have more variability in quality and higher rates of disease than seeds from certified providers.

For example, hybrid maize seeds contributed to massive yield gains in the United States in the 1930s and 1940s, but are used less widely in lower-income countries. Hybrid seeds are made by breeding two or more plants with different characteristics to create descendant cultivars that are more productive. Importantly, plants grown from hybrid seed will produce much lower-quality seed in the next generation, requiring farmers to repurchase seed each season to maintain a high-quality crop.

One study across 13 countries in Eastern, Southern, and Western Africa showed an average of 37% of maize area cultivated with hybrids, 21% with other higher-quality seed from certified providers, and 43% with seeds saved by farmers. Even among maize seed from certified providers, studies find that hybrid seeds yield on average 18% (or, by other estimates, 21–43%) more than the best-performing non-hybrid seed, enough to make up for the difference in the annual cost of seed. A 2017 study found that Kenyan farmers who plant hybrid maize seed are wealthier than farmers who grow non-hybrids.
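Whether a yield advantage makes up for buying new hybrid seed every season is a simple breakeven comparison: the extra grain, valued at the farm-gate price, must exceed the extra seed cost. A minimal sketch, in which the base yield and maize price are hypothetical placeholders rather than figures from the studies cited, and which ignores any other cost differences between hybrid and non-hybrid production:

```python
def breakeven_seed_premium(base_yield_t_ha: float, yield_gain: float,
                           grain_price_per_t: float) -> float:
    """Largest extra seed cost per hectare that the hybrid's extra grain can pay for."""
    extra_grain_t_ha = base_yield_t_ha * yield_gain
    return extra_grain_t_ha * grain_price_per_t


# HYPOTHETICAL inputs, chosen only to illustrate the calculation.
BASE_YIELD_T_HA = 1.5      # hypothetical yield of the best non-hybrid seed, t/ha
GRAIN_PRICE_PER_T = 250.0  # hypothetical farm-gate maize price, USD per tonne

for gain in (0.18, 0.21, 0.43):  # yield advantages quoted in the text
    premium = breakeven_seed_premium(BASE_YIELD_T_HA, gain, GRAIN_PRICE_PER_T)
    print(f"{gain:.0%} yield gain pays for up to ${premium:.2f}/ha in extra seed cost")
```

With real local yields, prices, and seed costs substituted in, the same comparison indicates whether the hybrid premium pays off for a given farmer; the barriers described next can tip that balance even when the arithmetic favors hybrids.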

The cost of seed is not the sole barrier to farmers growing hybrid crops. Weak extension systems, limited access to produce markets, low profit margins for smallholders, on-farm crop diversity that limits economies of scale for individual crops, and farmer loyalty to specific varieties all reduce the likelihood of farmer adoption of hybrid, or otherwise improved, seed. Private sector programs can overcome barriers to farmer adoption of improved seed but require the capacity to fund agricultural improvements through credit and debt systems. For example, the One Acre Fund provides farmers with improved seed and fertilizer on credit, trains farmers in modern agricultural techniques, and facilitates access to markets.

Innovation and the Known Unknown

Increasing global agricultural productivity is not just a question of raising yields in places where they are currently low. Novel technologies and continually improved practices must also play a key role in maintaining or increasing yields in higher-income countries.

Historically, agricultural breakthroughs have benefited immensely from the support of public sector research and development (R&D) funding. Public R&D remains crucially important for the maintenance of agricultural yields, but government spending on agricultural research has slowed down or declined in some major economies. In the United States, public agricultural R&D spending measured in real dollars declined by a third from 2000 to 2020.

Agricultural R&D drives productivity growth by spurring innovation. Increasing global agricultural R&D spending, especially public R&D, will be necessary to increase agricultural productivity globally. Analyses by agricultural economists consistently show the high impact of public agricultural research spending. A 2023 study, commissioned by The Breakthrough Institute, found that roughly doubling U.S. public agricultural R&D would increase agricultural output by 69.5% by 2050—about a two-fifths increase over a business-as-usual approach.

Beyond the need for broader agricultural innovation, several emerging technologies today could raise productivity while reducing environmental impacts. Alternative proteins can produce high quality protein from fewer crop calories than conventional livestock agriculture. Precision agriculture can reduce the need for chemical inputs without limiting crop yield. Feed additives for cattle can improve the efficiency of cattle diets, both reducing methane production and helping cattle grow faster.

These new technologies and improved production practices could be significant sources of productivity growth, but their benefits are not guaranteed. Many of these technologies are still in research and development, and their industries lack both the funding and the know-how for manufacturing or deployment at scale.

Conclusion

Ensuring food security is a crucial political and social concern. It is more likely than not that climate change will reduce overall agricultural productivity. However, climate change is very unlikely to be the primary driver of global yield changes for the foreseeable future. Instead, agricultural yield growth and overall productivity will continue to be the product of the many factors that have influenced it to date: government investment in research and development, farm programs, infrastructure, shifts in production location, technologies like crop breeding, farm machinery, and chemical or biological inputs, and much more.

We should certainly be concerned about the impacts of climate change on agriculture and seek to mitigate them. Doing so at the cost of funding other important agricultural efforts, however, would only further threaten the hundreds of millions of farmers and food-insecure people across the world who depend on rising global agricultural yields.